Last month we launched a new capability to detect AI usage in SaaS applications. What we found was striking.

In just 30 days, Obsidian Security observed 69,749 user interactions with SaaS-embedded AI features that involved corporate data. Most would have gone unnoticed without in-browser detection.

As organizations race to adopt the latest AI features to boost productivity, you cannot afford to play catch-up. Your legal and compliance teams are now turning to security for an answer you may not yet be able to give: are your AI-enabled SaaS vendors accessing data beyond what your data processing agreements authorize?

Without clear visibility into how shadow AI models interact with enterprise data, you risk unknowingly exposing sensitive IP and customer information to third parties, often through tools you and your users already trust.

When you think of AI, you probably picture standalone products like ChatGPT or Glean: tools your teams deliberately chose, evaluated, and connected to business data, which automate workflows and boost productivity using built-in AI models.

But these third-party tools are not the only places where AI is interacting with enterprise data and where exposure lives. Traditional SaaS platforms, from Atlassian to Twilio, Zendesk to Airtable, are rapidly and quietly embedding AI features directly into applications your teams already use every day.

This shift fundamentally changes how data is accessed, processed, and retained. Yet unless you constantly review product updates and release notes, it may take months to realize that sensitive data is being sent to an external AI model, one that wasn’t part of your original vendor review.

Because end users often control SaaS settings themselves, new AI features can be enabled without triggering security reviews or approval workflows. And unlike the AI tools your teams intentionally adopted, embedded AI arrives pre-installed in applications that already passed your last security review.

Consider what this looks like in practice:

Each of these scenarios represents a real, active transfer of enterprise data to an AI model that may not have been part of any risk assessment. More importantly, many of these capabilities ship without mature configuration controls or robust logging APIs, leaving you with limited ability to govern their use or to monitor it after the fact.

The productivity gains from these features are real, but so are the contractual and compliance consequences. Your organization likely has explicit policies prohibiting AI models from processing or training on certain categories of data. In some cases, these restrictions are written directly into customer agreements or MSAs.

These restrictions don’t disappear because a vendor quietly shipped a new feature. Manual reviews, vendor assurances, and user reporting are simply not fast enough to catch this activity before it becomes a compliance problem. You need stronger controls and real-time visibility into how AI features interact with your data.

Your Third-Party Risk Management (TPRM) processes were built to enforce security and compliance policies at the time of procurement. When a new SaaS tool is adopted, it typically undergoes an extensive review: your team evaluates the risks, decides what data the system can access, and documents the outcome to ensure the tool does not introduce unacceptable risk to the business.

With standalone AI tools, this pattern holds. Your teams intentionally select the product, evaluate the risks, and set boundaries.

Once a SaaS application passes its initial review, those security assumptions often remain unchanged for years. But when vendors later introduce new AI capabilities, the new features rarely receive the same scrutiny, even though the potential risks can be just as significant.

The result: AI features gain access to sensitive data, generate outputs based on proprietary information, or perform automated actions inside platforms that you’ve already “approved” and stopped watching.

Other approaches in the market attempt to spot when users deploy AI by scanning OAuth permissions, reviewing app configurations, or flagging AI tools at the network layer. These approaches can identify that an AI tool exists, but they can't tell you when an AI feature inside an already-approved application is being invoked.

That level of visibility requires monitoring at the point of interaction: the browser.

Obsidian Security helps you detect and manage these emerging risks, enabling safe and responsible use of AI across your business.

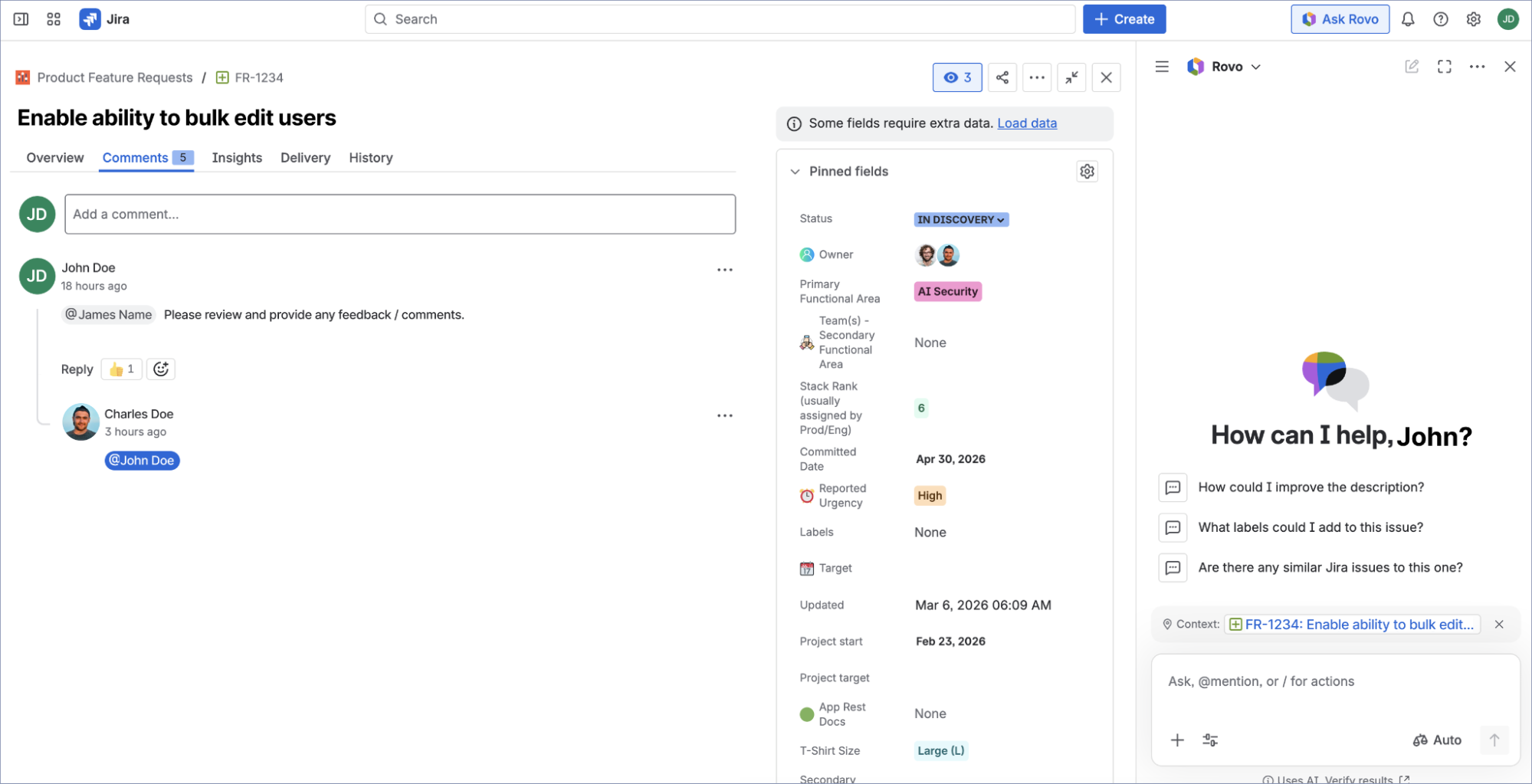

By deploying the Obsidian Security Browser Extension, you gain visibility at the exact point where users engage with SaaS. The browser is where AI features are enabled, where your data is entered, and where AI outputs are generated. And it’s the only place where you can observe what’s actually happening, rather than infer it from logs or configurations.

Monitoring these interactions with Obsidian allows you to detect when AI features are used inside popular applications, and surface that activity directly to security teams. With Obsidian, you can:

With this insight, you can govern SaaS applications that embed AI capabilities with the same rigor as net-new tool adoption. That means implementing evidence-based controls for the users who enable these features, the data they access, and the integrations they rely on.

Visibility into AI feature activity is the foundation, but it's only the beginning. As your SaaS vendors continue to embed AI capabilities at an accelerating pace, your exposure will keep expanding whether or not your policies do.

The organizations staying ahead of this challenge aren't just detecting AI activity after the fact. They're building the governance layer that lets them define which AI features are permitted, on which data, for which users, and enforce those policies continuously, not just at the point of procurement.

Because in a world where your SaaS vendor can turn on a new AI model next Tuesday, point-in-time reviews are a policy fiction. Real governance has to be continuous.

Start in minutes and secure your critical SaaS applications with continuous monitoring and data-driven insights.